Audio Spatializer SDK

The audio spatializer SDK provides controls to change the way your application transmits audio from an audio source into the surrounding space. It is an extension of the native audio plugin SDK.

The built-in panning of audio sources is a simple form of spatialization. It takes the source and regulates the gains of the left and right ear contributions based on the distance and angle between the Audio Listener and the Audio Source. This provides simple directional cues for the player on the horizontal plane.
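As a rough illustration of gain-based panning, the sketch below maps a horizontal angle and a distance attenuation factor to left and right gains with a constant-power (sine/cosine) pan law. It is not Unity's actual panner; the pan law, the `StereoGains` type, and the `ConstantPowerPan` helper are assumptions made for this example.

```cpp
#include <cmath>

// Per-ear gains produced by the sketch.
struct StereoGains {
    float left;
    float right;
};

// Hypothetical helper (not part of the Unity SDK).
// azimuthRadians: horizontal angle between the listener's forward axis and
//                 the source; 0 = straight ahead, positive = to the right.
// attenuation:    linear distance attenuation factor in [0, 1].
inline StereoGains ConstantPowerPan(float azimuthRadians, float attenuation)
{
    const float kHalfPi = 1.57079632679f;
    // Fold the azimuth into a pan position in [-1, 1]. Sources behind the
    // listener mirror onto the front, so only left/right is encoded.
    const float pan = std::sin(azimuthRadians);
    // Map the pan position to an angle in [0, pi/2].
    const float theta = (pan + 1.0f) * 0.5f * kHalfPi;
    StereoGains gains;
    gains.left = attenuation * std::cos(theta);
    gains.right = attenuation * std::sin(theta);
    return gains;
}
```

With this pan law the sum of the squared gains always equals the squared attenuation, so perceived loudness stays roughly constant as a source moves from one side to the other. It encodes no elevation or front/back cue, which is the gap a spatializer plugin is meant to fill.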

The Unity Audio Spatializer SDK and example implementation

To provide flexibility and support for working with audio spatialization, Unity has an open interface, the Audio Spatializer SDK, as an extension on top of the Native Audio Plugin SDK. You can replace the standard panner in Unity with a more advanced one, and give it access to important meta-data about the source and listener needed for the computation.

For an example of a native spatializer audio plugin, see the Unity Native Audio Plugin SDK. The plugin only supports a direct Head-Related Transfer Function (HRTF), and is intended for example purposes only.

You can use a simple reverb, included in the plugin, to route audio data from the spatializer plugin to the reverb plugin. The HRTF filtering is based on a modified version of the KEMAR data set. For more information on the KEMAR data set, see the MIT Media Lab’s documentation and measurement files.

If you would like to explore a data set obtained from a human subject, refer to IRCAM’s data sets.

Initializing the Unity Audio Spatializer

Unity applies spatialization effects directly after the Audio Source decodes audio data. This produces a stream of audio data in which each source has its own separate effect instance. Unity only processes the audio from that source with its corresponding effect instance.

To enable a plugin to operate as a spatializer, you need to set a flag in the description bit-field of the effect:

```cpp
definition.flags |= UnityAudioEffectDefinitionFlags_IsSpatializer;
```

If you set the UnityAudioEffectDefinitionFlags_IsSpatializer flag, Unity recognizes the plugin as a spatializer during the plugin scanning phase. When Unity creates an instance of the plugin, it allocates the UnityAudioSpatializerData structure for the spatializerdata member of the UnityAudioEffectState structure.

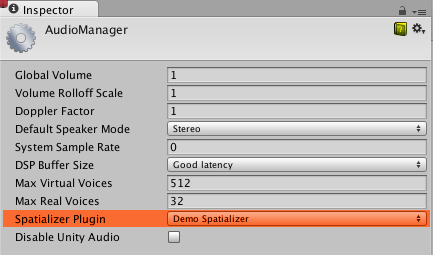

To use the spatializer in a project, select it in your Project settings (menu: Edit > Project Settings > Audio):

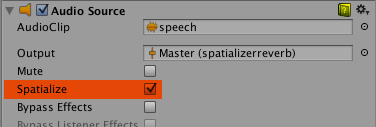

Then, in the Inspector window for an Audio Source that you want to use with the spatializer plugin, enable Spatialize:

You can also enable the spatializer for an Audio Source through a C# script, using the AudioSource.spatialize property.

In an application with a lot of sounds, you might want to only enable the spatializer on your nearby sounds, and use traditional panning on the distant ones, to reduce the CPU load on the mixing thread for the spatializer effects.
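One way to decide which sounds get the spatializer is to rank sources by distance to the listener and enable it only for the nearest few. The sketch below shows that selection logic in isolation. The `SelectSpatializedSources` function, its parameters, and the nearest-first policy are assumptions for illustration, not Unity behavior.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical helper: returns one flag per source, true where the
// spatializer should be enabled. At most maxSpatialized sources are chosen,
// nearest first, and only sources no farther than maxDistance qualify.
inline std::vector<bool> SelectSpatializedSources(
    const std::vector<float>& distances,
    std::size_t maxSpatialized,
    float maxDistance)
{
    // Sort source indices by ascending distance to the listener.
    std::vector<std::size_t> order(distances.size());
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::stable_sort(order.begin(), order.end(),
                     [&distances](std::size_t a, std::size_t b) {
                         return distances[a] < distances[b];
                     });

    std::vector<bool> spatialize(distances.size(), false);
    const std::size_t count = std::min(maxSpatialized, order.size());
    for (std::size_t i = 0; i < count; ++i) {
        if (distances[order[i]] > maxDistance)
            break; // every remaining source is farther away
        spatialize[order[i]] = true;
    }
    return spatialize;
}
```

In a Unity project, the resulting flags would typically be applied from a C# script by assigning each Audio Source's AudioSource.spatialize property.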

If you want Unity to pass spatial data to an audio mixer plugin that is not a spatializer, you can use the following flag in the description bit-field:

```cpp
definition.flags |= UnityAudioEffectDefinitionFlags_NeedsSpatializerData;
```

If a plugin initializes with the UnityAudioEffectDefinitionFlags_NeedsSpatializerData flag, the plugin receives the UnityAudioSpatializerData structure, but only the listenermatrix field is valid. For more information about UnityAudioSpatializerData, see the Spatializer effect meta-data section.

To stop Unity from applying distance-attenuation on behalf of a spatializer plugin, use the following flag:

```cpp
definition.flags |= UnityAudioEffectDefinitionFlags_AppliesDistanceAttenuation;
```

The UnityAudioEffectDefinitionFlags_AppliesDistanceAttenuation flag indicates to Unity that the spatializer handles the application of distance-attenuation. For more information on distance-attenuation, see the Attenuation curves and audibility section.
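The flags above are combined in a single bit-field, so a plugin can set more than one. The sketch below shows the pattern with stand-in types so that it compiles without the SDK headers. The `Sketch...` names and the numeric bit values are assumptions for illustration; a real plugin uses the `UnityAudioEffectDefinitionFlags_...` constants declared by the SDK.

```cpp
#include <cstdint>

// Stand-in flag values for illustration only. The real constants come from
// the SDK header and their values may differ.
enum SketchDefinitionFlags : std::uint32_t {
    kSketchIsSpatializer = 1u << 1,
    kSketchAppliesDistanceAttenuation = 1u << 3,
    kSketchNeedsSpatializerData = 1u << 4
};

// Stand-in for the effect definition structure; only the bit-field matters here.
struct SketchEffectDefinition {
    std::uint32_t flags = 0;
};

// Builds a definition for a spatializer that optionally takes over
// distance attenuation from Unity.
inline SketchEffectDefinition MakeSpatializerDefinition(bool handlesAttenuation)
{
    SketchEffectDefinition definition;
    definition.flags |= kSketchIsSpatializer;
    if (handlesAttenuation)
        definition.flags |= kSketchAppliesDistanceAttenuation;
    return definition;
}

// Returns true when the given flag is set in the definition.
inline bool HasFlag(const SketchEffectDefinition& definition, SketchDefinitionFlags flag)
{
    return (definition.flags & flag) != 0;
}
```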

Spatializer effect meta-data

Unlike other Unity audio effects that run on a mixture of sounds, Unity applies spatializers directly after the Audio Source decodes audio data. Each instance of the spatializer effect has its own instance of UnityAudioSpatializerData, mainly associated with data about the Audio Source.

```cpp
struct UnityAudioSpatializerData
{
    float listenermatrix[16]; // Matrix that transforms sourcepos into the local space of the listener
    float sourcematrix[16];   // Transform matrix of the Audio Source
    float spatialblend;       // Distance-controlled spatial blend
    float reverbzonemix;      // Reverb zone mix level parameter (and curve) on the Audio Source
    float spread;             // Spread parameter of the Audio Source (0..360 degrees)
    float stereopan;          // Stereo panning parameter of the Audio Source (-1: fully left, 1: fully right)

    // The spatializer plugin may override the distance attenuation to
    // influence the voice prioritization (leave this callback as NULL
    // to use the built-in Audio Source attenuation curve)
    UnityAudioEffect_DistanceAttenuationCallback distanceattenuationcallback;

    float minDistance;        // The minimum distance of the Audio Source.
                              // This value may be useful for determining when to apply near-field effects.
    float maxDistance;        // The maximum distance of the Audio Source, or the
                              // distance where the audio becomes inaudible to the listener.
};
```

The structure contains fields corresponding to the properties of the Audio Source component in the Inspector: Spatial Blend, Reverb Zone Mix, Spread, Stereo Pan, Minimum Distance, and Maximum Distance.

The UnityAudioSpatializerData structure contains the full 4x4 transform matrices for the Audio Listener and Audio Source. The listener matrix is inverted so that you can multiply the two matrices to get a relative direction-vector. The listener matrix is always orthonormal, so you can quickly calculate the inverse matrix.

Unity’s audio system only provides the raw source sound as a stereo signal. The signal is stereo even when the source is mono or multi-channel, and Unity uses up- or down-mixing, as required.

Matrix conventions

The sourcematrix field contains a copy of the Audio Source’s transformation matrix. For a default Audio Source on a GameObject that is not rotated, the matrix is a translation matrix where the position is encoded in elements 12, 13 and 14.

The listenermatrix field contains the inverse of the AudioListener’s transform matrix.

You can determine the direction vector from the AudioListener to the Audio Source as shown below, where L is the listenermatrix and S is the sourcematrix:

```cpp
float dir_x = L[0] * S[12] + L[4] * S[13] + L[ 8] * S[14] + L[12];
float dir_y = L[1] * S[12] + L[5] * S[13] + L[ 9] * S[14] + L[13];
float dir_z = L[2] * S[12] + L[6] * S[13] + L[10] * S[14] + L[14];
```

For a listener that is not rotated, the position in (L[12], L[13], L[14]) is the negative of the position you see in Unity’s Inspector window for the camera that carries the listener. If the camera is also rotated, you have to undo the effect of the rotation first. To invert a translation-rotation matrix, transpose the top-left 3x3 rotation matrix of L, apply it to the translation, and negate the result, as shown below:

```cpp
float listenerpos_x = -(L[0] * L[12] + L[ 1] * L[13] + L[ 2] * L[14]);
float listenerpos_y = -(L[4] * L[12] + L[ 5] * L[13] + L[ 6] * L[14]);
float listenerpos_z = -(L[8] * L[12] + L[ 9] * L[13] + L[10] * L[14]);
```

For an example in the code for the Audio Spatializer plugin, see line 215 in the Plugin_Spatializer.cpp file.
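The two formulas in this section can be wrapped into small helpers and checked against known transforms. The sketch below does that with plain `float[16]` arrays in the same element layout. The `Vec3` type and the function names are hypothetical, and the azimuth helper assumes +z is forward and +x is right in listener space.

```cpp
#include <cmath>

struct Vec3 {
    float x, y, z;
};

// Source position expressed in listener space: L * (S[12], S[13], S[14], 1).
// L is the listenermatrix (already inverted), S is the sourcematrix.
inline Vec3 SourceDirectionInListenerSpace(const float* L, const float* S)
{
    Vec3 d;
    d.x = L[0] * S[12] + L[4] * S[13] + L[8] * S[14] + L[12];
    d.y = L[1] * S[12] + L[5] * S[13] + L[9] * S[14] + L[13];
    d.z = L[2] * S[12] + L[6] * S[13] + L[10] * S[14] + L[14];
    return d;
}

// World-space listener position recovered from the inverse listener matrix:
// transpose the 3x3 rotation, apply it to the translation, then negate.
inline Vec3 ListenerWorldPosition(const float* L)
{
    Vec3 p;
    p.x = -(L[0] * L[12] + L[1] * L[13] + L[2] * L[14]);
    p.y = -(L[4] * L[12] + L[5] * L[13] + L[6] * L[14]);
    p.z = -(L[8] * L[12] + L[9] * L[13] + L[10] * L[14]);
    return p;
}

// Distance from the listener to the source.
inline float Length(const Vec3& v)
{
    return std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z);
}

// Horizontal angle in radians: 0 = straight ahead, positive = to the right.
// Assumes +z forward and +x right in listener space.
inline float AzimuthRadians(const Vec3& d)
{
    return std::atan2(d.x, d.z);
}
```

For example, with an unrotated listener at (1, 2, 3) the listenermatrix holds (-1, -2, -3) in elements 12 to 14. A source at (4, 6, 3) then yields the direction (3, 4, 0) at a distance of 5, and `ListenerWorldPosition` returns (1, 2, 3).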

Attenuation curves and audibility

Unless you specify the UnityAudioEffectDefinitionFlags_AppliesDistanceAttenuation flag, as specified in the Initializing the Unity Audio Spatializer section, the Unity audio system still controls the distance-attenuation. Unity applies distance-attenuation to the sound before it enters the spatialization stage, and allows the audio system to know the approximate audibility of the source. The audio system uses approximate audibility for dynamic virtualization of sounds based on importance to match the user-defined Max Real Voices limit.

Unity does not retrieve audibility information from actual signal level measurements, but uses the combination of the values that it reads from the distance-controlled attenuation curve, the Volume property, and the mixer’s applied attenuations.
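To make the idea concrete, the sketch below estimates audibility from the three values mentioned above and keeps only the loudest voices real. The text does not say how Unity combines the values or how it ranks voices, so the product of linear gains, the `VoiceLevels` type, and both function names are assumptions for illustration.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical per-voice inputs, all as linear gains.
struct VoiceLevels {
    float curveAttenuation; // value read from the distance-attenuation curve
    float volume;           // Volume property of the Audio Source
    float mixerAttenuation; // attenuation applied by the mixer
};

// Assumption: the factors combine as a simple product of linear gains.
inline float EstimateAudibility(const VoiceLevels& v)
{
    return v.curveAttenuation * v.volume * v.mixerAttenuation;
}

// Returns the indices of the voices that stay real, loudest first.
// Any voice not in the result would be virtualized.
inline std::vector<std::size_t> PickRealVoices(
    const std::vector<VoiceLevels>& voices, std::size_t maxRealVoices)
{
    std::vector<std::size_t> order(voices.size());
    for (std::size_t i = 0; i < order.size(); ++i)
        order[i] = i;

    std::stable_sort(order.begin(), order.end(),
                     [&voices](std::size_t a, std::size_t b) {
                         return EstimateAudibility(voices[a]) >
                                EstimateAudibility(voices[b]);
                     });

    if (order.size() > maxRealVoices)
        order.resize(maxRealVoices);
    return order;
}
```

Because the estimate never looks at the signal itself, a quiet passage in a nearby source still ranks above a loud passage in a distant one.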

You can directly override the attenuation curve, or you can use the value calculated by the Audio Source’s curve as a base for modification. To override or modify the value, use the callback in the UnityAudioSpatializerData structure as shown below:

```cpp
typedef UNITY_AUDIODSP_RESULT (UNITY_AUDIODSP_CALLBACK* UnityAudioEffect_DistanceAttenuationCallback)(
    UnityAudioEffectState* state,
    float distanceIn,
    float attenuationIn,
    float* attenuationOut);
```

You can also use a simple custom logarithmic curve, as shown below:

```cpp
UNITY_AUDIODSP_RESULT UNITY_AUDIODSP_CALLBACK SimpleLogAttenuation(
    UnityAudioEffectState* state,
    float distanceIn,
    float attenuationIn,
    float* attenuationOut)
{
    const float rollOffScale = 1.0f; // Similar to the one in the Audio Project Settings
    *attenuationOut = 1.0f / max(1.0f, rollOffScale * distanceIn);
    return UNITY_AUDIODSP_OK;
}
```

Using C# scripts from the Unity API

There are two methods on the Audio Source that allow setting and getting parameters from the spatializer effect: SetSpatializerFloat and GetSpatializerFloat. These methods work similarly to the SetFloatParameter and GetFloatParameter methods in the generic native audio plugin interface. However, SetSpatializerFloat and GetSpatializerFloat take an index to the parameter they must set or read, while SetFloatParameter and GetFloatParameter refer to the parameters by name.

The boolean property AudioSource.spatialize is linked to the Spatialize option in Unity’s Inspector window for an Audio Source. The property controls how Unity instantiates and deallocates the spatializer effect, based on the selected plugin in your Audio Project Settings.

If instantiation of your spatializer effect is very resource-intensive, in terms of memory or other resources in your project, it might be effective to allocate your spatialization effects from a preset “pool,” so that Unity does not need to create a new instance of the spatializer every time you need to use it. If you keep your Unity plugin interface bindings very lightweight and dynamically allocate your audio effects, you can avoid frame drops or other performance issues in your project.
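A pool of this kind can be as simple as a fixed array of preallocated instances with an in-use flag. The sketch below shows one possible shape. `SketchSpatializerInstance`, its 4096-sample buffer, and `SketchSpatializerPool` are hypothetical, and the pool is not thread-safe as written.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical per-source state that is expensive to construct.
struct SketchSpatializerInstance {
    std::vector<float> workBuffer; // stands in for filter state or HRTF data
    bool inUse = false;

    SketchSpatializerInstance() : workBuffer(4096, 0.0f) {}
};

// Fixed-capacity pool: all instances are built once, up front.
class SketchSpatializerPool {
public:
    explicit SketchSpatializerPool(std::size_t capacity) : instances_(capacity) {}

    // Returns a free instance, or nullptr when the pool is exhausted.
    SketchSpatializerInstance* Acquire()
    {
        for (SketchSpatializerInstance& instance : instances_) {
            if (!instance.inUse) {
                instance.inUse = true;
                return &instance;
            }
        }
        return nullptr;
    }

    // Clears the instance's state and makes it available again.
    void Release(SketchSpatializerInstance* instance)
    {
        if (instance == nullptr)
            return;
        std::fill(instance->workBuffer.begin(), instance->workBuffer.end(), 0.0f);
        instance->inUse = false;
    }

private:
    std::vector<SketchSpatializerInstance> instances_; // never resized, so pointers stay valid
};
```

With this layout, the plugin's create callback would call `Acquire` and its release callback would call `Release`, so no heavy allocation happens when a source starts or stops being spatialized. A production version would also need a policy for the exhausted case, and locking if the callbacks can run on different threads.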

Known limitations of the example plugin

Due to the fast convolution algorithm, quick movements cause some zipper artifacts, which you can remove through the use of overlap-save convolution or cross-fading buffers.
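As a minimal sketch of the cross-fading approach, the function below blends a block rendered with the previous filter into the same block rendered with the new filter, using a linear ramp. It is not taken from the example plugin; the `CrossfadeBlock` name and the choice of a linear ramp are assumptions.

```cpp
#include <cstddef>

// Linearly cross-fades from `previous` (block rendered with the old filter)
// to `current` (same block rendered with the new filter).
// The first output sample equals previous[0]; the last equals current[length - 1].
inline void CrossfadeBlock(const float* previous, const float* current,
                           float* out, std::size_t length)
{
    for (std::size_t i = 0; i < length; ++i) {
        // Ramp position in [0, 1]; a single-sample block takes the new value.
        const float t = (length > 1)
            ? static_cast<float>(i) / static_cast<float>(length - 1)
            : 1.0f;
        out[i] = (1.0f - t) * previous[i] + t * current[i];
    }
}
```

The cost of this approach is that each block in which the source or listener moved is filtered twice, once per filter, before blending.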

The code also does not support tilting the listener’s head, whether the listener is directly attached to the player character, or a camera located elsewhere.